Beyond TAR

Why Test Uncertainty Ratio (TUR) / Cm is Essential for Modern Calibration and Risk Management

Abstract:

Metrologists have long used the Test Accuracy Ratio (TAR) to support various decision rules to help control risk by comparing the accuracy of the reference against the accuracy of what is being tested. Yet, as precision needs have grown, TAR has become increasingly inadequate for controlling measurement risk and reliability. That is because, by design, TAR may ignore contributions to Measurement Uncertainty that occur with each attempt to measure any quantity.

Many industries require a more comprehensive calibration approach that considers all Uncertainty sources. Certainly, most written standards mention establishing a metrologically traceable measurement chain. When Measurement Uncertainty is evaluated correctly, small and large companies may realize significant cost benefits varying from thousands to millions of dollars.

This paper discusses TAR's limitations and explains why the Test Uncertainty Ratio (TUR) or Measurement Capability Index (Cm) is a better tool for modern metrology.

We will discuss real-world examples, relevant standards like ISO/IEC 17025 and ANSI/NCSL Z540.3, and practical applications of TUR / Cm that demonstrate its superiority in managing risk.

Introduction:

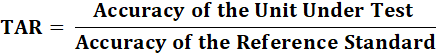

TAR is often defined as the ratio of the accuracy of the unit under test (tolerance, specification limits) divided by the accuracy of the reference standard (the standard used for the calibration or test).

For decades, the Test Accuracy Ratio (TAR) has been used as a quick and easy method to ensure that the reference standard used for calibration is more accurate than the equipment being calibrated. However, this approach often overlooks one key component: a complete Measurement Uncertainty analysis.

Calibration processes have evolved to meet increasingly stringent demands in some modern industries. Many companies and calibration laboratories now strive to maximize their decision-making accuracy while optimizing production yield. This includes improving the ability to correctly classify instruments that "pass" or "fail" during calibration, reducing unnecessary production rejections, and preventing defective products from reaching consumers.

While historically valuable, the traditional Test Accuracy Ratio (TAR) approach has become inadequate (not very cost-effective when all things are considered) for addressing these complex modern calibration challenges. For any champions continuing to push TAR, yes, TAR is not all bad. TAR may still be valuable early in selecting test and measuring equipment (T&ME). However, beyond using it as a possible tool to narrow down equipment purchases and possibly a quick validation tool for less risky measurements, there are inherent risks and flaws associated with using TAR to control your measurement risk.

TAR has several flaws:

TAR only compares the accuracy of the unit under test with the accuracy of the reference standard.

It often omits critical Measurement Uncertainty contributors.

Users often confuse accuracy with measurement uncertainty, which includes contributions relating to precision.

Many manufacturers take shortcuts in published specifications, omitting significant error sources like reproducibility, resolution, and environmental factors, among other things.

Aggressively published marketing specifications for standards often use averages to mask extreme values when setting equipment tolerances. These specifications may be based on a single, unrepeatable achievement and fail to account for the real-world conditions in which the equipment is typically used.

Modern manufacturing and testing environments should prioritize comprehensive risk management methodologies to optimize yield. These approaches must extend beyond simple ratio calculations, ensuring measurement accuracy, precision, and operational efficiency.

Unless your product experiences overwhelming demand that significantly exceeds supply, the financial impact of adopting advanced risk management strategies in today's economic climate extends far beyond basic metrics. These strategies are crucial for maintaining a competitive edge and ensuring long-term success. However, even companies with highly sought-after products can benefit from enhanced risk management, driving greater efficiency and profitability. Anyone looking for more proof of this should research American production after World War II, as there are countless examples where the growing baby boomer population consumed everything that several manufacturers made.

Early risk detection significantly reduces unplanned downtime, prevents costly regulatory breaches, and minimizes post-release retrofits to try to make something work ("band-aid mentality"). Organizations that adopt these advanced frameworks achieve significant operational cost savings by minimizing production rejections, reducing equipment maintenance expenses, and preventing supply chain disruptions.

This paper provides examples and discusses a superior (less risky) approach for those still using TAR. The less risky approach uses the Measurement Capability Index Cm, or the correct Test Uncertainty Ratio (TUR) definition. We will explore how TUR or the Measurement Capability Index Cm can help you comply with standards such as ISO/IEC 17025, ISO 10012, ISO/IEC 17034, ISO 9001, IATF 16949, ANSI/NCSL Z540.3 (withdrawn, yet still required by several organizations), and others.

Note: The Test Uncertainty Ratio (TUR) is defined in ANSI/NCSL Z540.3, and the Measurement Capability Index is outlined in JCGM 106:2012, "Evaluation of Measurement Data." Although these terms are used interchangeably because they are mathematically equivalent, they are cited differently across industries. In the calibration sector, professionals might be more familiar with TUR, while those in manufacturing often refer to the Measurement Capability Index Cm, which resembles a process capability index.

TAR: A Legacy System with Major Flaws

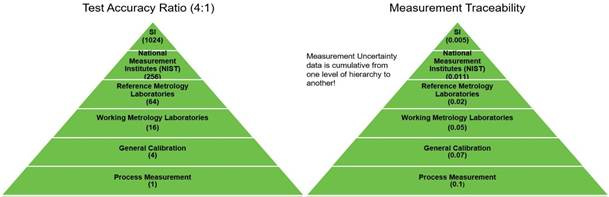

Figure 1 The above images are courtesy of Paul Reese [1].

Many earlier standards, such as MIL-C-45662 (1960), specifically in section 3.1.5, mandated a 10:1 Test Accuracy Ratio (TAR). MIL-C-45662 (Calibration System Requirements) also set out general requirements for calibration systems to ensure measurement accuracy in military and contractor facilities. Similarly, MIL-HDBK-52 emphasized the importance of measurement accuracy and changed the 10:1 TAR requirement to a 4:1 or 10:1 TAR. Throughout the decades, many organizations have adopted the 10:1 TAR or 4:1 TAR as best practice, even though it wasn't always a strict requirement.

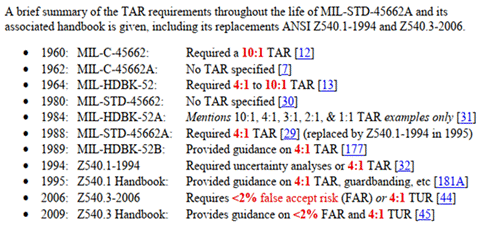

In the 1980s, MIL-STD-45662A: Calibration System Requirements (1988) was released. Section 5.2 states, "Unless otherwise specified in the contract requirements, the collective uncertainty of the measurement standards shall not exceed 25 percent of the acceptable tolerance for each characteristic being calibrated [2]." This means that the standard required more than just the simple 4:1 TAR: capping the collective uncertainty at 25 % of the tolerance corresponds to a 4:1 ratio of tolerance to uncertainty, which is closer to TUR's definition and more in line with the TAR formula below. ANSI/NCSL Z540.1-1994: Calibration Laboratories and Measuring and Test Equipment—General Requirements later replaced MIL-STD-45662A.

ANSI/NCSL Z540.1-1994 could have been the first standard to clearly define the term Test Uncertainty Ratio (TUR), as evidenced by section 10.2(b), which states, "The collective uncertainty of the measurement standards shall not exceed 25 % of the acceptable tolerance (e.g., manufacturer's specification) for each characteristic of the measuring and test equipment being calibrated or verified [3]." However, this returns us to the earlier problem: many people confuse Measurement Uncertainty with measurement accuracy. Even the ANSI/NCSL Z540-1-1994 Handbook editor confused TUR with TAR.

The ANSI/NCSL Z540-1-1994 Handbook defines the formula for TAR.

The ANSI/NCSL Z540-1-1994 Handbook notes that a default alternative to an uncertainty analysis is a TAR of 4:1, meaning the tolerance of the parameter being tested should be at least four times the combination of uncertainties of all measurement standards used in the test. On page 37, the handbook mentions that some refer to TAR as Test Uncertainty Ratios (TURs).

Note: It is the opinion of many that this potential blunder is what has led to mass confusion in the metrological field that has plagued many for decades.

Imagine sending equipment to two suppliers, A and B. Supplier A uses the older definition of TAR, and Supplier B uses the ANSI/NCSL Z540-1-1994 Handbook definition. The likely amount of risk is vastly different: the measurement risk from Supplier A depends on how well the manufacturer characterized its accuracy, whereas Supplier B will have calculated the Measurement Uncertainty (hopefully correctly) for its standard(s).

Fortunately, ANSI/NCSL Z540.3 was released in 2006, and in 2009, the ANSI/NCSLI Z540.3 Handbook further explained TUR and various decision rules to control risk.

The shift from TAR to TUR signifies a broader move towards greater precision and reliability in calibration processes, which is essential for reducing measurement risks. As we assess the history and implications of these definitions, it becomes evident that transitioning from dated (TAR) to modern (TUR / Cm) approaches to evaluating measurement risk requires more than just altering technical specifications. This evolution is crucial for ensuring safety and optimizing quality.

1. Origins of TAR

The Test Accuracy Ratio originated in the mid-20th century, primarily as a simple way to ensure that measurement devices were accurate enough for their intended application. Under the MIL-STD-120 standard, the U.S. military adopted the 4:1 TAR as part of its procurement process. Many contracts required the calibration standard to be four times more accurate than the calibrated device. At the time, this approach was practical. Systems were more straightforward, and precision requirements were less stringent.

One of its early proponents, Jerry L. Hayes, working at the U.S. Naval Ordnance Laboratory, recognized the increasing complexity of missile systems and the need for more reliable measurements. TAR was introduced as a quick and efficient method for evaluating calibration processes, particularly under the constraints of the time, which included limited computational power and less sophisticated measurement systems.

TAR was designed to compare the accuracy of the reference standard to the unit under test (UUT), ensuring that the calibration standard was at least four times more accurate than the equipment being calibrated. This approach worked well for the simpler systems of the mid-20th century, where precision tolerances were more relaxed and risk management was less complex. However, it was intended as a temporary fix to manage risks until better methods or computational tools became available. That time arrived long ago, yet many still use TAR [4].

2. Why TAR Was Effective at the Time

The rationale behind TAR was based on a need for quick decision-making in environments where time and resources were limited, such as military operations. The U.S. Navy, dealing with missile testing and other high-stakes applications, needed to ensure that calibration instruments provided accurate results without overburdening the system with complex uncertainty analyses. TAR offered a simple, easy-to-implement solution that fit the immediate needs of the time.

In its early days, TAR worked well enough because it provided a quick way to judge whether equipment met basic requirements. Calibration in the 1950s and 1960s was far less complex than today, and TAR was sufficient for those standards. However, TAR's limitations became more evident as industries grew more demanding. By the 1980s and 1990s, sectors like aerospace, pharmaceuticals, and electronics required tighter tolerances and higher precision.

It became evident that TAR could guarantee only basic accuracy. On its own it was insufficient, because it did not incorporate the wider range of factors that contribute to measurement uncertainty, including environmental conditions, the resolution of the equipment, repeatability, equipment degradation over time, and more.

The Limitations of TAR in Today's Metrology

1. Ignoring Uncertainty: The Core Problem with TAR

The biggest issue with TAR is that it doesn't correctly account for measurement uncertainty. TAR compares the accuracy tolerance of a Unit Under Test (UUT) with that of a calibration standard. TAR often fails to account for environmental factors, operator errors, equipment resolution, and equipment repeatability, which can introduce Uncertainty into measurements. Today, it remains a good rule of thumb for any particular measurement instance, yet it fails completely when we consider Measurement as a complete process.

For example, let's say you're using a micrometer to measure a shaft with a tolerance of ±0.015 mm. If the calibration standard has a tolerance of ±0.001 mm, the TAR may be calculated to be 15:1, which looks good on paper. Yet what happens when you factor in the resolution of the micrometer or environmental changes like temperature? Likely, 15:1 becomes about 1.5:1. Without accounting for these significant contributions to measurement uncertainties, you risk making a bad measurement decision!
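The collapse of the ratio described above can be illustrated with a minimal sketch. Only the ±0.015 mm tolerance and the ±0.001 mm reference tolerance come from the text; the micrometer resolution, repeatability, and thermal values below are hypothetical numbers chosen for illustration, and the root-sum-square combination with a coverage factor of k = 2 is an assumed, simplified uncertainty budget.

```python
import math

def expanded_uncertainty(standard_uncertainties, k=2.0):
    """Combine standard uncertainties by root-sum-square, then apply coverage factor k."""
    return k * math.sqrt(sum(u ** 2 for u in standard_uncertainties))

tolerance = 0.015       # mm, shaft tolerance (+/-), from the text
reference_tol = 0.001   # mm, calibration standard tolerance (+/-), from the text

# TAR looks only at the two accuracy statements.
tar = tolerance / reference_tol

# Hypothetical contributors (assumed values, not from the text).
resolution = 0.01       # mm, micrometer readout step
contributors = [
    reference_tol / math.sqrt(3),  # reference tolerance treated as rectangular
    resolution / math.sqrt(12),    # resolution treated as rectangular over one step
    0.003,                         # mm, repeatability (standard deviation)
    0.002,                         # mm, thermal effects (standard uncertainty)
]

U = expanded_uncertainty(contributors)
tur = tolerance / U     # symmetric tolerance: span / (2U) reduces to tolerance / U

print(f"TAR = {tar:.1f}:1")
print(f"Expanded uncertainty U = {U:.4f} mm")
print(f"TUR = {tur:.2f}:1")
```

With these assumed contributors the ratio drops from 15:1 to well under 2:1, which is the order of magnitude the example describes.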

2. TAR Provides a False Sense of Security

One of the dangers of relying on TAR is that it can create a false sense of confidence because it is too simple by design. You might believe your Measurement is accurate because the ratio looks favorable, yet you could overlook significant risks without considering measurement uncertainty. This is particularly problematic in industries where precision is critical, like aerospace, where a minor calibration error could lead to equipment failure, or in pharmaceuticals, where slight deviations in measurements could compromise safety.

3. The Failure to Meet Modern Standards

International standards like ISO/IEC 17025 and ANSI/NCSL Z540.3 moved away from TAR as a primary measurement tool because it doesn't provide a complete picture of the risks involved. ISO/IEC 17025, for instance, emphasizes the importance of Measurement Uncertainty and requires that all uncertainties be documented and considered in calibration processes. Measurement Capability Index, not TAR, is aligned with these modern requirements because it evaluates the measurement uncertainty of the measurement process.

4. Requiring an Arbitrary Number Like 4:1 is not Sustainable

Test Accuracy Ratio (TAR) is outdated, as it does not correctly capture measurement risk in many applications and industries. A fixed ratio such as 4:1 is certainly not sustainable at every level of the chain when propagated from the process measurement back to the SI.

TUR (Measurement Capability Index): For Better Risk-Based Decisions

A significant advantage of the Measurement Capability Index is that it checks more factors that can affect Measurement Uncertainty than TAR does. This is important to establish metrological traceability, which means being able to link a measurement back to a trusted standard through a clear, documented process.

The International Vocabulary of Metrology (VIM) defines metrological traceability as the "property of a measurement result whereby the result can be related to a reference through a documented unbroken chain of calibrations, each contributing to the measurement uncertainty." [4].

Note: In many cases, applying TAR does not meet this definition. This is important as many standards require measurements to be metrologically traceable, and many of those who use TAR likely fail to meet the definition.

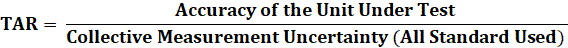

1. Understanding TUR: What It Is and How It Works

Test Uncertainty Ratio (TUR) takes a more comprehensive approach to Measurement Uncertainty by comparing the UUT's tolerance to the Expanded Uncertainty of the measurement process. Unlike TAR, which looks solely at accuracy, TUR incorporates many contributors to Uncertainty—environmental factors, equipment repeatability, equipment resolution, and even human error.

Understanding the Test Uncertainty Ratio (TUR) is the first step in weighing the significance of the claims proposed in this paper.

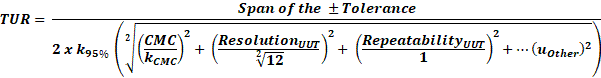

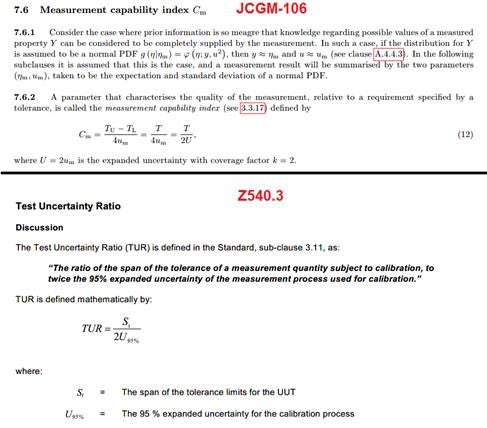

TUR is defined in ANSI/NCSL Z540.3 as:

● The ratio of the span of the tolerance of a measurement quantity subject to calibration to twice the 95 % expanded uncertainty of the measurement process used for calibration [6].
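The definition above translates directly into a small function. This is a sketch only: the function name `tur` and the example limits are hypothetical, and the expanded uncertainty is assumed to be already evaluated at 95 %.

```python
def tur(lower_limit, upper_limit, expanded_uncertainty_95):
    """Span of the tolerance divided by twice the 95 % expanded uncertainty."""
    span = upper_limit - lower_limit
    return span / (2.0 * expanded_uncertainty_95)

# Hypothetical example: limits of 9.98 and 10.02 units with U = 0.005 units.
example = tur(9.98, 10.02, 0.005)
print(f"TUR = {example:.2f}:1")
```

For a symmetric tolerance of ±L the span is 2L, so the expression reduces to L divided by U.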

The Measurement Capability Index, often referred to as Cm, is a metric used in metrology to evaluate how well a measurement process or system can meet specified tolerances relative to the measurement uncertainty. In JCGM 106, this index is the ratio of the width of the specified tolerance interval to four times the standard uncertainty of the measurement, which is the same as twice the expanded uncertainty when the coverage factor is k = 2.

From ANSI/NCSLI Z540.3 Handbook.

ANSI/NCSL defines TUR by stating, "For the numerator, the tolerance used for Unit Under Test (UUT) in the calibration procedure should be used in the calculation of the TUR. This tolerance is to reflect the organization's performance requirements for the Measurement & Test Equipment (M&TE), which are, in turn, derived from the intended application of the M&TE. In many cases, these performance requirements may be those described by the manufacturer's tolerances and specifications for the M&TE and are therefore included in the numerator [6]."

In most cases, the numerator is the UUT Accuracy Tolerance. The denominator is slightly more complicated. Per the ANSI/NCSL Z540.3 Handbook, "For the denominator, the 95 % expanded Uncertainty of the measurement process used for calibration following the calibration procedure is to be used to calculate TUR. The value of this uncertainty estimate should reflect the results that are reasonably expected from the use of the approved procedure to calibrate the M&TE. Therefore, the estimate includes all components of error that influence the calibration measurement results, which would also include the influences of the item being calibrated except for the bias of the M&TE. The calibration process error, therefore, includes temporary and non-correctable influences incurred during the calibration such as repeatability, resolution, error in the measurement source, operator error, error in correction factors, environmental influences, etc. [5]."

From JCGM 106

The formula for the Measurement Capability Index is:

Cm = T / (2U)

Where:

● T is the specified tolerance (i.e., the total permissible range within which the measurement results must fall),

● U is the expanded Uncertainty of the Measurement, usually calculated at a 95 % confidence level.
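As a worked check on the equivalence between Cm and TUR, the index can be written out in the notation of JCGM 106. The symbols $u_m$ (standard uncertainty of the measurement), $k$ (coverage factor), and $L$ (half-width of a symmetric tolerance) are introduced here for illustration. The sketch assumes $k = 2$ and a symmetric tolerance.

$$
C_m = \frac{T}{4\,u_m} = \frac{T}{2U}, \qquad U = k\,u_m,\quad k = 2
$$

$$
T = 2L \;\Rightarrow\; C_m = \frac{2L}{2U} = \frac{L}{U} = \mathrm{TUR}
$$

Under these assumptions, the tolerance span over twice the expanded uncertainty gives the same number whether it is labeled Cm or TUR.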

A higher Cm value indicates that the measurement process can meet tight tolerances relative to its Uncertainty. Values greater than one are generally considered acceptable, as they show that the measurement system can accurately produce results within the tolerance limits, with enough margin to account for uncertainties [7].

Calculating TUR / Cm is crucial because it is a commonly accepted practice when making a statement of conformity. When TUR / Cm is combined with the location of the measured value relative to the tolerance limits, one can calculate measurement risk during calibration. TUR is clearly defined in standards such as ANSI/NCSL Z540.3 and the ANSI/NCSL Z540.3 Handbook. However, some equipment manufacturers write their own unique standards that market their products favorably. In reality, the products' application may vary, and acceptance requirements may change depending on the application. Therefore, failing to adhere to clearly defined, universal standards can put customers or consumers at increased risk.

The end-user should consider accreditation bodies' recommended requirements for contributors to Measurement Uncertainty to aid in calculating the optimal TUR / Cm.*

* Note: This assumes the end-user employs a decision rule based on TUR / Cm. While several methods exist to calculate measurement decision risk, this paper primarily compares TAR with TUR. The discussion should center on the Measurement Capability Index (Cm). TUR has often been incorrectly used interchangeably with TAR, leading to confusion. This issue does not arise with the Measurement Capability Index (Cm), which makes it a more suitable term for precise and clear discussions in this context.

One may argue that the reference standard uncertainty and environmental factors are the only requirements for the TUR calculation to make a conformity assessment decision. Make no mistake: TUR is defined and agreed upon in ANSI/NCSL Z540.3:2006 and the ANSI/NCSL Z540.3 Handbook. Therefore, it should not be a point of debate.

The definition of CPU (Calibration Process Uncertainty or Expanded Measurement Uncertainty of the Calibration Process) establishes whether the calibration laboratory calibrating the equipment will consider relevant uncertainty contributors to the customer's device. If a calibration laboratory does not include these uncertainty contributors, it passes the risk on to the customer or consumer because it prefers not to retain the risk. This is often done without the end-user's knowledge.

The end-user must know the risk that is acceptable to them. For example, suppose they want to maintain less than 2 % PFA (Probability of False Accept) using a global risk calculation but lack sufficient reliability data. In that case, we know the TUR needs to be 4.6:1 or greater.

Note: The 4.6:1 comes from a paper Paul Reese wrote for NCSLI titled Risk Mitigation Strategies for Compliance Testing. It is beyond the scope of this paper to discuss all of the various decision rules, including global and specific risk decision rules. A decision rules guidance document has been written that tries to convey these topics better and can be downloaded here (https://mhforce.com/wp-content/uploads/2024/04/Decision-Rule-Guidance-1st-Edition-V1.1.pdf)
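To give a feel for how a global PFA depends on TUR, the following is a rough sketch under a simple bivariate-normal model. It is not necessarily the model used in the cited paper. The assumptions are: the UUT error and the measurement error are independent, zero-mean, and normally distributed; the tolerance is symmetric and normalized to ±1; `reliability` is the true in-tolerance probability of the UUT population; and the expanded uncertainty is twice the standard uncertainty. The function name `global_pfa` and the midpoint integration are illustrative choices.

```python
from statistics import NormalDist

def global_pfa(tur, reliability, steps=20000):
    """Approximate P(UUT out of tolerance AND measured in tolerance), tolerance = +/-1."""
    limit = 1.0
    # Spread of true UUT errors implied by the assumed in-tolerance probability.
    sigma_uut = limit / NormalDist().inv_cdf((1.0 + reliability) / 2.0)
    # Standard uncertainty of the measurement: U = 2u and TUR = limit / U.
    u_meas = limit / (2.0 * tur)
    uut = NormalDist(0.0, sigma_uut)
    meas = NormalDist(0.0, u_meas)

    # Midpoint-rule integration over true errors above the upper limit.
    upper = limit + 8.0 * sigma_uut
    width = (upper - limit) / steps
    total = 0.0
    for i in range(steps):
        e = limit + (i + 0.5) * width
        # Probability that the observed value e + m still falls inside +/-limit.
        p_accept = meas.cdf(limit - e) - meas.cdf(-limit - e)
        total += uut.pdf(e) * p_accept * width
    return 2.0 * total  # both tails, by symmetry

for ratio in (2.0, 4.0, 4.6, 10.0):
    print(f"TUR {ratio:>4}:1 -> PFA = {100 * global_pfa(ratio, 0.90):.2f} %")
```

Sweeping `reliability` as well as `tur` shows how the worst-case PFA for a given TUR can be bounded when reliability data is not available.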

2. Real-world applications of TUR/Cm

Figure 2 Morehouse Load Cell and Gauge Buster (UUT Example), which is used to Measure Force

Let's look at a typical example from the field. Imagine you're calibrating a 1000 kgf load cell. TAR might give you a favorable ratio—say 4:1 or better—but that doesn't tell you the whole story. When you switch to TUR / Cm, you account for the load cell's resolution, repeatability, environmental conditions, and more. In this case, if you ignore these factors, you might miss critical sources of error. When TUR / Cm is applied, you get a much more accurate picture of whether the calibration is reliable.

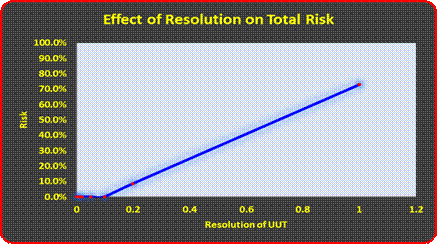

3. Notice the formula for TUR / Cm includes the resolution of the UUT.

If we go back to our load cell example, as the resolution of the device increases, so does the overall Measurement Uncertainty. The figure below shows the relationship between resolution and Expanded Uncertainty. When the resolution is 0.001 kgf, it is insignificant. At 0.01 kgf, it is 11.52 % of the overall budget, and when raised to 0.05 kgf, it becomes dominant.

Figure 3 Resolution and the Effect on Total Risk Using a 1 000 kgf Morehouse Load Cell and Varying the Indicator Resolution (No repeatability)

Figure 4 Resolution as a Percentage of the Total Measurement Uncertainty Using a 1000 kgf Morehouse Load Cell and Varying the Indicator Resolution
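The resolution percentages quoted above can be approximated with a short calculation. The underlying budget is not given in the text, so the sketch assumes a standard uncertainty of 0.008 kgf for everything except resolution (a value back-calculated from the quoted figures) and treats resolution as a rectangular distribution over one readout step. The share is computed on variances.

```python
import math

BASE_STD_UNC = 0.008  # kgf, assumed standard uncertainty excluding UUT resolution

def resolution_share(resolution, base_std_unc=BASE_STD_UNC):
    """Percentage of the combined variance that comes from UUT resolution."""
    u_res = resolution / math.sqrt(12)  # rectangular distribution over one step
    var_res = u_res ** 2
    return 100.0 * var_res / (var_res + base_std_unc ** 2)

for res in (0.001, 0.01, 0.05):
    print(f"resolution {res} kgf -> {resolution_share(res):.2f} % of the budget")
```

Under these assumptions the 0.01 kgf case gives about 11.52 %, the 0.001 kgf case is well under 1 %, and the 0.05 kgf case exceeds half of the budget.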

To establish metrological traceability, you must account for and correctly report Measurement Uncertainty. Calibration labs that are required to follow ILAC P14:09/2020 must meet its requirement that "Contributions to the Uncertainty stated on the calibration certificate shall include relevant short-term contributions during calibration and contributions that can reasonably be attributed to the customer's device. Where applicable, the Uncertainty shall cover the same contributions to Uncertainty that were included in evaluation of the CMC uncertainty component, except that uncertainty components evaluated for the best existing device shall be replaced with those of the customer's device. Therefore, reported uncertainties tend to be larger than the Uncertainty covered by the CMC [8]."

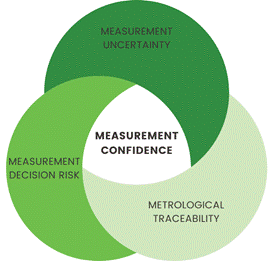

Figure 5 Measurement Confidence

To achieve measurement confidence, three pillars of Measurement must be satisfied.

These are:

1. Measurement Uncertainty: This involves the robust (thorough) evaluation of Measurement Uncertainty, as it supports the following two pillars of Measurement. It refers to the extent to which measurements vary and estimates the confidence in a measurement result. It's crucial for decision-making, as it helps users understand the reliability of a measurement.

2. Metrological Traceability: This involves ensuring that measurements are traceable to the International System of Units (SI), creating a documented, unbroken chain of calibrations, each contributing to the measurement uncertainty. In some cases, metrological traceability is indirectly traceable to the SI (e.g., test measurement uncertainty).

3. Measurement Decision Rules: Decision rules determine whether a measurement result is fit for its intended purpose, considering Measurement Uncertainty (more justification for robust evaluation of measurement uncertainty). These rules should consider acceptable risks (depending on the application), ensuring that measurement results are used to make conformity decisions. TAR is highly discouraged in this paper as it does not fully consider measurement uncertainty; TUR / Cm is encouraged for use with a Measurement Decision Rule.

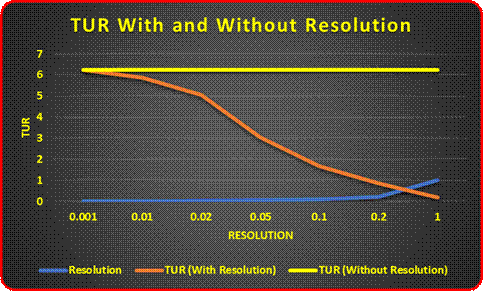

Figure 6 TUR with and without UUT Resolution

When the resolution is considered, the TUR starts at 6.25:1 with a UUT resolution of 0.001 kgf and then declines to 0.17:1 with a UUT resolution of 1.0 kgf. When the resolution is not accounted for, the TUR stays at 6.25:1 regardless of the resolution. If a calibration laboratory uses TAR or similar ratio methods, the conformity assessment could ignore the UUT's resolution.
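The effect of including or ignoring UUT resolution can be sketched numerically. The tolerance and base uncertainty are not stated in the text, so the sketch assumes a tolerance of ±0.1 kgf and a standard uncertainty of 0.008 kgf excluding resolution, values chosen because they are consistent with the quoted ratios. Resolution is treated as rectangular over one readout step, and the coverage factor is assumed to be k = 2.

```python
import math

TOLERANCE = 0.1       # kgf (+/-), assumed UUT tolerance
BASE_STD_UNC = 0.008  # kgf, assumed standard uncertainty excluding UUT resolution

def tur(resolution=None, k=2.0):
    """TUR for a symmetric tolerance; pass resolution=None to ignore UUT resolution."""
    variance = BASE_STD_UNC ** 2
    if resolution is not None:
        variance += (resolution / math.sqrt(12)) ** 2
    expanded = k * math.sqrt(variance)
    return TOLERANCE / expanded

print(f"resolution ignored   -> TUR = {tur():.2f}:1")
for res in (0.001, 0.01, 0.05, 0.1, 1.0):
    print(f"resolution {res:>5} kgf -> TUR = {tur(res):.2f}:1")
```

With these assumed inputs the ratio is about 6.25:1 at 0.001 kgf and about 0.17:1 at 1.0 kgf, while the value that ignores resolution never moves.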

Also, in many cases, the only thing that separates one operator from another is the ability to distinguish the resolution. I might say something is on the line while someone else calls it over it. Such is the case when reading mechanical instruments. Even with digital instruments, if the last digit is flickering between 0 and 1, one might say it leans more towards 0, and another person may choose 1.

4. TAR is not sustainable.

Figure 7 TAR versus TUR, illustrating how TAR is not sustainable.

The two pyramids demonstrate the stark contrast between theoretical Test Accuracy Ratio (TAR) and real-world measurement traceability in calibration systems. While the left pyramid shows an idealistic TAR model, with each level being four times more accurate than the previous one (from 1 to 1024), the right pyramid reveals how Measurement Uncertainty accumulates through the calibration chain (from 0.005 to 0.1).

The traditional 4:1 TAR approach proves impractical because real-world measurements face cumulative uncertainties, environmental factors, and instrumental limitations that make maintaining such precise ratios throughout the chain unsustainable. In this example, the NMI would need a measurement process that is 256 times more accurate than the process measurements used in general industry. Maybe this is possible for some measurement parameters, though it is impossible for most of them.

Note: These pyramid comparisons made five levels of hierarchy from general industry to the NMI; in some cases, there may be more or less.
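The compounding behind the 256 figure is simple to reproduce. The sketch below assumes five levels in the hierarchy and a fixed 4:1 ratio between adjacent levels, as in the example; the function name is illustrative.

```python
def accuracy_multiples(levels, ratio=4):
    """How many times more accurate each level must be than the bottom level."""
    return [ratio ** i for i in range(levels)]

multiples = accuracy_multiples(5)
print(multiples)      # [1, 4, 16, 64, 256]
print(multiples[-1])  # 256, the requirement at the top of a five-level chain
```

Each additional level multiplies the requirement at the top by another factor of four, which is why a fixed ratio becomes harder to sustain as the chain grows.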

Why Switching to TUR (Measurement Capability Index) Is Crucial for Modern Metrology

1. TUR / Cm When Compared to TAR Reduces Measurement Risk

In today's precision-driven industries, the stakes are high. A minor error in calibration can result in equipment failure, safety hazards, or non-compliance with regulatory standards. TUR / Cm provides a more accurate risk assessment by incorporating the appropriate uncertainty contributors. It doesn't just tell you whether a device is accurate; it tells you whether you can trust that Measurement based on the conditions under which it was made.

2. TUR / Cm Better Aligns with International Standards

As mentioned earlier, international standards like ISO/IEC 17025, ISO 10012, ISO 9001, and related quality standards emphasize the importance of Uncertainty in calibration processes. These standards require that Uncertainty be factored into every measurement decision. TUR / Cm is the tool that allows you to meet these standards while also reducing your measurement risk.

The paper TAR vs. TUR: Reasons to Upgrade to TUR argues that the Test Uncertainty Ratio (TUR) is a more effective metric than the Test Accuracy Ratio (TAR) because it considers all sources of measurement error, not just instrument accuracy. This transition, guided by international standards like ISO/IEC 17025, promotes a more thorough understanding of measurement uncertainty. Author Howard Zion states, "An even more compelling reason to move to TUR, and specifically uncertainties, is that this enables anyone affected by a false accept (consumer risk) or false reject (producer risk) condition, found within the indeterminate region, to quantify this risk and understand how it affects the measurement [9]." By implementing TUR, organizations can better evaluate and mitigate risks, leading to more informed decision-making in calibration and quality assurance.

3. Consider what happens in industries such as aerospace, medical devices, and pharmacological sciences.

Consider the aerospace industry, where even a minor calibration error can have catastrophic consequences. In this field, relying solely on TAR is insufficient. By accounting for sources of measurement uncertainty, TUR / Cm ensures that critical components are calibrated to the highest standards, minimizing the risk of failure during operation.

TUR can make the difference between life and death in the medical device industry, where precision is equally crucial. Devices like infusion pumps and pacemakers must be calibrated with the utmost accuracy. TUR's comprehensive risk assessment ensures reliable measurements, protecting patients and manufacturers from costly recalls.

In 21 CFR §211.68(a) under Current Good Manufacturing Practice for Finished Pharmaceuticals, the FDA states: "If such equipment is so used, it shall be routinely calibrated, inspected, or checked according to a written program designed to assure proper performance. Written records of those calibration checks and inspections shall be maintained [10]."

While the regulation establishes fundamental risk mitigation measures, it does not explicitly address the distinction between Test Accuracy Ratio (TAR) and Test Uncertainty Ratio (TUR). However, TUR offers a more comprehensive risk management approach than TAR by accounting for more sources of Measurement Uncertainty rather than just instrument accuracy.

Organizations that adopt TUR-based calibration strategies are better positioned to meet regulatory expectations, reduce citations, and ensure greater measurement reliability. More importantly, this shift enhances pharmaceutical quality control, ultimately helping deliver the proper medication doses to millions of people with greater accuracy and confidence.

4. Cost Savings Example

Some may be thinking of what all this means in terms of cost.

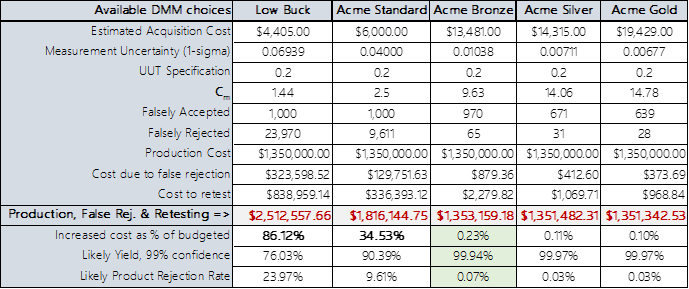

Figure 8 Cm of Various Instruments and cost courtesy of Greg Cenker

Figure 8 illustrates the potential cost savings achieved by adopting TUR/Cm-based calibration practices compared to traditional TAR methods. Using TAR may allow someone to evaluate equipment at the initial procurement stage, yet it might also come with hidden risks. If TAR is the only metric used, it could be very costly. Remember the earlier discussion on significant cost benefits, varying from thousands to millions of dollars?

Using Test Uncertainty Ratio (TUR) instead of Test Accuracy Ratio (TAR) can save a company hundreds of thousands of dollars in unnecessary testing and scrap costs.

Suppose a manufacturer needs to produce resistors with a tight tolerance of ± 0.2 ohms. The production cost is fixed at $1,350,000.00.

They selected a mid-tier digital multimeter (DMM), the Acme Standard, with a TAR of 4:1, which seemed good enough. However, this common approach did not account for total measurement uncertainty, leading to false rejections and high retesting costs. The actual TUR / Cm was only 2.5:1.

The result was a total production cost of $1,816,144.75.

Had they considered TUR, which factors in a more complete measurement uncertainty, they could have purchased better test equipment upfront. This would have reduced unnecessary scrap and retesting, improved yield, and saved money in the long run. With the Acme Gold, the total production cost would have been $1,351,342.53.

The difference in equipment cost would have been $13,492.00, and the savings from reduced false rejection and retesting would have been $464,802.22 for the period in question. If this were a monthly production run, the savings would exceed $5.5 million per year.
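The arithmetic behind this example can be checked with a short script. This is only a sketch: the variable names are illustrative, the ratios are assumed to be tolerance divided by accuracy (TAR) or by expanded uncertainty (TUR) for a symmetric tolerance, and the yearly figure assumes twelve identical monthly periods, which the example does not state.

```python
from decimal import Decimal

# Figures quoted in the resistor example.
tolerance = Decimal("0.2")              # +/- tolerance, ohms
tar = Decimal("4")                      # quoted TAR (4:1)
tur = Decimal("2.5")                    # actual TUR / Cm (2.5:1)
cost_standard = Decimal("1816144.75")   # total production cost, Acme Standard
cost_gold = Decimal("1351342.53")       # total production cost, Acme Gold
equipment_delta = Decimal("13492.00")   # extra equipment cost, Acme Gold

# Assumption: ratio = tolerance / (reference accuracy or expanded uncertainty).
implied_reference_accuracy = tolerance / tar    # ohms, implied by the TAR
implied_expanded_uncertainty = tolerance / tur  # ohms, implied by the TUR

# Difference in total production cost for the period in question.
savings_per_period = cost_standard - cost_gold

# Assumption: twelve identical periods per year (not stated in the example).
savings_per_year = savings_per_period * 12

# Extra equipment cost expressed as a share of one period's savings.
equipment_share_pct = equipment_delta / savings_per_period * 100

print(f"Implied reference accuracy:   +/- {implied_reference_accuracy} ohm")
print(f"Implied expanded uncertainty: +/- {implied_expanded_uncertainty} ohm")
print(f"Savings per period:  ${savings_per_period:,.2f}")
print(f"Savings per year:    ${savings_per_year:,.2f}")
print(f"Equipment premium:   {equipment_share_pct:.1f}% of one period's savings")
```

Under these assumptions, the 4:1 TAR corresponds to a reference accuracy of ±0.05 ohms, while the 2.5:1 TUR corresponds to an expanded uncertainty of ±0.08 ohms. `Decimal` is used so the dollar amounts are not subject to binary floating-point rounding.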

Regarding PFA, PFR, and material yield, TUR is a better approach than TAR, as it provides a more accurate risk assessment, leading to lower costs and higher production efficiency.

Recommendations for TUR / Cm Implementation

Transition Roadmap

Audit existing processes: Identify TAR-dependent calibrations with high scrap/rework rates.

Train personnel: Metrologists should understand uncertainty analysis and measurement risk. If no metrologist is available in-house, organizations such as NCSLI offer workshops, webinars, training, and other references, and many qualified subject matter experts (consultants) can help.

Update procedures: Align with ISO/IEC 17025 and the appropriate guidance documents for your discipline.

Software integration: Deploy tools that automate TUR calculation, such as IndySoft and template-based uncertainty budgets, or use tools such as Suncal to perform measurement risk analysis.

Conclusion: Reflecting on TAR and Risk

As we've seen, the Test Accuracy Ratio (TAR) was developed in an era when the need for quick, efficient calibration methods outweighed the ability to factor in complex uncertainties. It was a simple and effective solution for its time. However, relying solely on TAR in today's precision-driven industries can expose organizations to significant risk. TAR ignores critical contributors to Uncertainty—factors like environmental conditions, operator variability, instrument repeatability, and resolution—that can lead to incorrect conformity decisions.

Consider the implications of these oversights: How confident can you be in your measurements if you aren't accounting for all potential sources of error? What risks are you unknowingly accepting by using TAR, which offers only a partial view of measurement accuracy? Even minor miscalculations can have catastrophic consequences in high-stakes sectors such as aerospace, medical devices, and pharmaceuticals.

TUR / Cm, on the other hand, addresses these challenges head-on by integrating all sources of Uncertainty into the calibration process. It provides a much clearer picture of measurement reliability, helping to ensure that decisions are based on accurate, comprehensive data. In light of modern standards like ISO/IEC 17025 and ANSI/NCSL Z540.3, which emphasize the importance of Uncertainty, the question becomes: Can you afford to stick with TAR when better tools like TUR / Cm are available?

Ultimately, it comes down to risk management. While TAR may still be used for convenience or tradition, the risks associated with its limitations can no longer be overlooked. The move to TUR / Cm is about improving accuracy and ensuring safety, reliability, and compliance in an increasingly demanding metrological landscape.

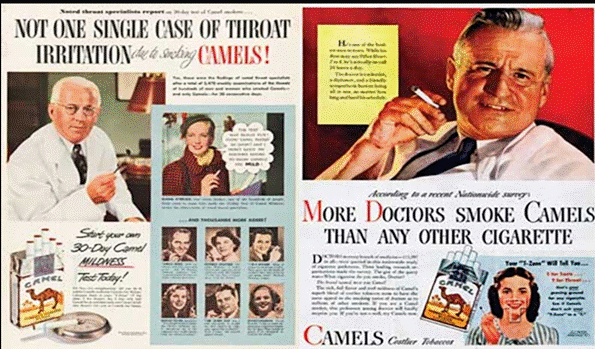

And for those who want to keep insisting on TAR, let us remember what else was said to be safe in the 1950s.

Who knew that George C. Scott was a doctor who smoked?

References:

[1] Paul Reese, "Calibration in Regulated Industries: Federal Agency Use of ANSI Z540.3 and ISO/IEC 17025," NCSLI Workshop & Symposium, 2021.

[2] Department of Defense, MIL-STD-45662A: Calibration System Requirements, 1988.

[3] American National Standards Institute/National Conference of Standards Laboratories (ANSI/NCSL), ANSI/NCSL Z540.1-1994: Calibration Laboratories and Measuring and Test Equipment—General Requirements, 1994.

[4] Scott M. Mimbs, "Measurement Decision Risk – The Importance of Definitions," Fluke Calibration, 2013.

[5] Joint Committee for Guides in Metrology (JCGM), International Vocabulary of Metrology—Basic and General Concepts and Associated Terms (VIM), JCGM 200:2012.

[6] American National Standards Institute/National Conference of Standards Laboratories International (ANSI/NCSLI), ANSI/NCSLI Z540.3 Handbook: Requirements for the Calibration of Measuring and Test Equipment, 2006 (Handbook Published 2009).

[7] Joint Committee for Guides in Metrology (JCGM), JCGM 106:2012 - Evaluation of Measurement Data—The Role of Measurement Uncertainty in Conformity Assessment, 2012.

[8] International Laboratory Accreditation Cooperation (ILAC), ILAC P-14:09/2020 - Policy for Uncertainty in Calibration, Sept 2020.

[9] Howard Zion, "TAR vs. TUR: Reasons to Upgrade to TUR," NCSLI Measure Journal, 2016.

[10] U.S. Food and Drug Administration, 21 CFR § 211.68 - Automatic, Mechanical, and Electronic Equipment, Code of Federal Regulations, 2025 Edition.

Thanks to Dilip Shah, Greg Cenker, Stephen Puryear, Paul Reese, and Ryan Kelly.

Appendix A

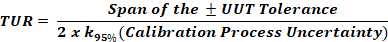

Additional information supporting the claim that TUR is mathematically identical to Cm.

Figure 9 Definitions of both TUR and Cm
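A brief sketch of why the two quantities coincide, assuming the ANSI/NCSL Z540.3 definition of TUR and the JCGM 106:2012 definition of Cm. The symbols are chosen here for illustration and may differ from those in Figure 9: $T_U$ and $T_L$ are the upper and lower tolerance limits, $u$ is the standard measurement uncertainty, and $U_{95} = k\,u$ is the expanded uncertainty.

$$
\mathrm{TUR} = \frac{T_U - T_L}{2\,U_{95}}, \qquad C_m = \frac{T_U - T_L}{4\,u}
$$

With a coverage factor of $k = 2$, so that $U_{95} = 2u$:

$$
\mathrm{TUR} = \frac{T_U - T_L}{2\,(2u)} = \frac{T_U - T_L}{4\,u} = C_m
$$

The equality depends on $k = 2$. For any other coverage factor the two ratios differ by the constant factor $2/k$.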